Performing High Pressure Calibrations

- 11 Dec 2020


High-pressure measurement is important to ensure the safety of operators. When performing these calibrations, measurement quality and operator safety are critical.

What is high pressure?

High pressure is a relative term. What some consider to be high, others regard as a normal range. Within the scope of this application note, we define high pressure as any pressure greater than 20 MPa (3000 psi). This threshold is higher than what is available in a standard gas cylinder and is an obvious breakpoint when looking at the variety and availability of different types of pressure reference standards.

Static pressure calibrations are performed at pressures as high as 500 MPa (75 000 psi), with rare applications requiring pressures as high as 1 GPa (150 000 psi). This application note focuses on the lower end of this high-pressure regime, but many of the considerations discussed can be extrapolated to these very high pressures.
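The psi figures quoted in this note are rounded. As a quick check, the sketch below converts the MPa values using the definition 1 psi = 6894.757293168 Pa. The helper name `mpa_to_psi` is illustrative and not part of any library.

```python
# 1 psi (pound-force per square inch) expressed in pascals
PA_PER_PSI = 6894.757293168


def mpa_to_psi(p_mpa: float) -> float:
    """Convert a pressure in megapascals to pounds per square inch."""
    return p_mpa * 1e6 / PA_PER_PSI


# Pressures mentioned in this section, in MPa
for label, p_mpa in [
    ("high-pressure threshold", 20.0),
    ("upper static calibration range", 500.0),
    ("rare very-high-pressure work", 1000.0),
]:
    print(f"{label}: {p_mpa:g} MPa = {mpa_to_psi(p_mpa):,.0f} psi")
```

20 MPa works out to about 2900 psi and 500 MPa to about 72 500 psi, so the round psi values in the text are convenient approximations rather than exact equivalents.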

The physics behind pressure calibration

To examine all of the intricacies of high-pressure calibration, we must first understand the physics involved and why the system behaves the way it does. The Ideal Gas Law approximates the behavior of many gases under different conditions, but with several limitations. For our purposes, it is useful because it describes the variables that affect the pressure in an enclosed system.

Specifically, it states:

PV=nRT

Where:

• P = pressure

• V = volume

• n = amount of gas in moles (proportional to the number of molecules)

• R = ideal gas constant

• T = absolute temperature

This tells us that pressure is impacted by three things:

• Volume of the system

• Number of molecules in the system

• Temperature of the fluid

The relationship between pressure and volume is inverse; as the volume decreases, the pressure increases. The number of molecules and the temperature each have a direct relationship with pressure; increasing either one increases the pressure.

What does this mean? To change the pressure in a system, there are three things that can be done:

• Change the volume. Examples include a screw press or the variable volume on a pressure comparator or deadweight tester.

• Change the number of molecules in a system. This is how most gas pressure controllers operate. Additional gas is metered in or exhausted out of the system to increase or decrease the pressure.

• Change the temperature in order to change the pressure. This is a rarely used approach but can be used to stabilize the pressure at a given set point.

The Ideal Gas Law is also useful in understanding why pressure can be unstable. Any change in pressure must be explained by one or more of the three variables: a volume change (expansion or contraction of pressure lines), a change in the number of molecules (a leak), or temperature effects (as the fluid cools, the pressure decreases with it).
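These three levers can be put into numbers by rearranging the Ideal Gas Law as P2 = P1 · (V1/V2) · (n2/n1) · (T2/T1). The sketch below assumes ideal-gas behavior, which real gases only approximate at high pressure, so treat the results as estimates. The function name is hypothetical, and pressures must be absolute and temperatures in kelvin.

```python
def pressure_after_change(
    p1: float,
    *,
    volume_ratio: float = 1.0,  # V2 / V1
    moles_ratio: float = 1.0,   # n2 / n1
    t1_k: float = 293.15,       # initial absolute temperature, K
    t2_k: float = 293.15,       # final absolute temperature, K
) -> float:
    """Return P2 from P1 using PV = nRT (same units as p1, absolute pressure)."""
    return p1 * moles_ratio * (t2_k / t1_k) / volume_ratio


# Halving the volume (for example with a screw press) doubles the pressure.
print(pressure_after_change(10.0, volume_ratio=0.5))  # → 20.0 (MPa)

# Gas warmed by compression to 30 °C cools back to 25 °C at constant volume.
print(pressure_after_change(20.0, t1_k=303.15, t2_k=298.15))  # MPa
```

In the second case a 5 K temperature drop takes roughly 0.33 MPa off a 20 MPa reading with no change in volume or gas quantity, which is the kind of drift that can be mistaken for a leak.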

Viscosity

Viscosity is the property of a fluid to resist movement or flow. In theory, when measuring static pressure the medium is static, so viscosity should not have a significant effect. At high pressure, it’s not always that simple. For safety reasons, small-bore tubing is often used for high pressure. If a viscous fluid (like some oils) is used, the pressure can take an excessively long time to stabilize. This is especially important to consider when testing will be performed at different temperatures, as the viscosity of a fluid changes with temperature.
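To see why small bores and viscous fluids slow things down, the Hagen-Poiseuille relation for laminar flow in a round tube, Q = π r⁴ ΔP / (8 μ L), is a useful first approximation: flow scales with the fourth power of the radius and inversely with viscosity, so the time needed to equalize pressure grows roughly the opposite way. This relation is not taken from the original note, and the tube length, pressure difference, and oil viscosities below are assumed, illustrative values.

```python
import math


def laminar_flow_rate(inner_diameter_m: float, length_m: float,
                      viscosity_pa_s: float, delta_p_pa: float) -> float:
    """Hagen-Poiseuille volumetric flow rate (m^3/s) through a straight round tube.

    Valid only for steady, laminar flow of an incompressible fluid.
    """
    radius = inner_diameter_m / 2
    return math.pi * radius**4 * delta_p_pa / (8 * viscosity_pa_s * length_m)


LENGTH_M = 2.0      # assumed tubing length
DELTA_P_PA = 1e5    # assumed 0.1 MPa driving pressure difference

cases = [
    ("oil, 2 mm bore", 0.002, 0.05),         # assumed viscosity 0.05 Pa*s
    ("oil, 1 mm bore", 0.001, 0.05),
    ("colder oil, 1 mm bore", 0.001, 0.10),  # assumed viscosity doubled by cooling
]
for name, bore_m, mu in cases:
    q = laminar_flow_rate(bore_m, LENGTH_M, mu, DELTA_P_PA)
    print(f"{name}: {q * 1e6:.3g} mL/s")
```

Halving the bore cuts the flow by a factor of 16, and doubling the viscosity halves it again, which is why a narrow line filled with cold, viscous oil can take much longer to settle.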

Compressibility

Compressibility is the property of a fluid that dictates how much its volume changes when pressure is applied. The Ideal Gas Law has already shown that the pressure and volume of the system are closely linked; how much the pressure changes for a given volume change is determined by the fluid’s compressibility. A gas is highly compressible, so a small change in the volume of the system produces only a small change in pressure.
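To put numbers on the contrast between gases and liquids, the sketch below compares the fractional volume reduction needed to go from roughly atmospheric pressure to 20 MPa for an ideal gas held at constant temperature (P·V constant) and for a liquid treated with a constant bulk modulus. The 2 GPa bulk modulus is an assumed, illustrative value of the right order for common liquids, and the function names are hypothetical.

```python
def gas_volume_fraction_removed(p_start: float, p_end: float) -> float:
    """Ideal gas at constant temperature (P*V constant): fraction of the
    starting volume that must be swept out to go from p_start to p_end."""
    return 1 - p_start / p_end


def liquid_volume_fraction_removed(p_start: float, p_end: float,
                                   bulk_modulus: float) -> float:
    """Liquid with constant bulk modulus K: dV/V ~= dP/K (valid while dP << K)."""
    return (p_end - p_start) / bulk_modulus


P0_PA = 0.1e6        # roughly atmospheric
P_TARGET_PA = 20e6   # the 20 MPa high-pressure threshold used in this note
K_LIQUID_PA = 2.0e9  # assumed bulk modulus for a liquid

print(f"gas:    {gas_volume_fraction_removed(P0_PA, P_TARGET_PA):.1%} of the volume")
print(f"liquid: {liquid_volume_fraction_removed(P0_PA, P_TARGET_PA, K_LIQUID_PA):.3%} of the volume")
```

Under these assumptions the gas has to be squeezed into about 0.5 % of its starting volume, while the liquid needs only about a 1 % volume reduction, which is why a modest screw press can reach high pressure with oil.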

Conversely, liquids are considered incompressible for most practical purposes. Therefore, reducing the volume of the system even slightly will dramatically increase the pressure. This makes it easier to generate high pressures with liquids than with gas.

Head pressure

The weight of a column of fluid generates a pressure at the bottom of the column.

An example of this is a swimming pool; the water pressure is greater at the bottom of the pool than it is on the surface.

Mathematically, this is shown in this equation:

P=hgρ

Where:

• P = pressure generated by the fluid column (the pressure difference between the bottom and the top of the column)

• h = height of the fluid column

• g = acceleration due to gravity

• ρ = density of the fluid

If we are measuring the pressure in a system at two different places (reference and test pressures) and these points are at different heights, there will be a pressure difference between them caused by the head pressure.

If the pressure medium is a liquid, its density is considered constant with pressure, so the head pressure is constant regardless of the overall pressure of the system. If the pressure medium is a gas, the density is much lower but increases with pressure, so the head pressure grows as the system pressure rises.

At low pressures, liquid head pressure can be a significant part of the overall pressure measurement. At higher pressures, it becomes less significant compared to the overall pressure.
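The head correction P = hgρ is easy to evaluate for both media. The sketch below assumes a 0.2 m height difference between reference and DUT, an oil density of 860 kg/m³, and nitrogen at 20 °C with its density estimated from the Ideal Gas Law (only approximate at these pressures). These inputs and the function names are illustrative assumptions, not values from the note.

```python
G_STANDARD = 9.80665  # m/s^2, standard gravity (local gravity is better for accurate work)
R = 8.314462618       # J/(mol*K), molar gas constant
M_N2 = 0.0280134      # kg/mol, molar mass of nitrogen


def head_pressure(height_m: float, density_kg_m3: float, g: float = G_STANDARD) -> float:
    """Pressure difference (Pa) across a fluid column of the given height: P = h*g*rho."""
    return height_m * g * density_kg_m3


def ideal_gas_density(p_pa: float, t_k: float, molar_mass_kg_mol: float) -> float:
    """Density (kg/m^3) from the Ideal Gas Law; only approximate at high pressure."""
    return p_pa * molar_mass_kg_mol / (R * t_k)


HEIGHT_M = 0.2   # assumed height difference between reference and DUT
RHO_OIL = 860.0  # kg/m^3, assumed oil density
T_K = 293.15     # 20 °C

for p_pa in (1e6, 7e6, 20e6):
    oil = head_pressure(HEIGHT_M, RHO_OIL)
    gas = head_pressure(HEIGHT_M, ideal_gas_density(p_pa, T_K, M_N2))
    print(f"{p_pa / 1e6:>4.0f} MPa: oil head {oil:7.1f} Pa ({oil / p_pa:.4%}), "
          f"nitrogen head {gas:6.1f} Pa ({gas / p_pa:.4%})")
```

Under these assumptions the oil head stays at about 1.7 kPa at every pressure, so its relative effect shrinks as pressure rises, while the nitrogen head grows in proportion to the system pressure and stays at a roughly constant, much smaller fraction of the reading.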

Considerations when performing high-pressure calibrations

Safety

The first thing to consider when performing high-pressure calibrations is safety.

This includes:

- Proper personal protection equipment (PPE), such as the use of safety glasses

- Proper selection of equipment. All pressure boundary equipment (including items like fittings, tubing, valves, and gauges) must be rated for the appropriate maximum working pressure.

- Operator training. The user of the calibration equipment needs to be properly trained in the safe operation of the equipment. This is especially true for manually operated equipment that requires the operator to physically turn screw presses or valves.

- Inclusion of pressure-limiting devices, such as relief valves or burst discs, which provide a last line of defense against an over-pressure situation.
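The equipment-rating rule above amounts to a weakest-link check: the whole pressure circuit is only as strong as its lowest-rated component. The sketch below encodes that check together with an assumed relief-valve policy (set at or above the highest test pressure but no higher than the weakest rating). The component names, ratings, and the `check_setup` helper are hypothetical; actual relief settings should follow the equipment manufacturer's guidance.

```python
from dataclasses import dataclass


@dataclass
class Component:
    name: str
    rated_mpa: float  # manufacturer's maximum working pressure


def check_setup(components: list[Component], max_test_mpa: float,
                relief_set_mpa: float) -> list[str]:
    """Return a list of problems; an empty list means the assumed checks pass."""
    weakest = min(components, key=lambda c: c.rated_mpa)  # the weakest link
    problems = []
    if max_test_mpa > weakest.rated_mpa:
        problems.append(f"test pressure {max_test_mpa} MPa exceeds "
                        f"{weakest.name} rating {weakest.rated_mpa} MPa")
    if relief_set_mpa > weakest.rated_mpa:
        problems.append(f"relief set at {relief_set_mpa} MPa, above {weakest.name} rating")
    if relief_set_mpa < max_test_mpa:
        problems.append("relief would open below the maximum test pressure")
    return problems


# Hypothetical circuit
circuit = [
    Component("tubing", 100.0),
    Component("isolation valve", 70.0),
    Component("DUT gauge", 40.0),
]
print(check_setup(circuit, max_test_mpa=35.0, relief_set_mpa=38.0))  # → []
print(check_setup(circuit, max_test_mpa=45.0, relief_set_mpa=50.0))
```

In the second call the DUT gauge is the limiting component, so both the test pressure and the relief setting are flagged even though the tubing and valve would tolerate them.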


Fluid selection

What medium should be used when performing pressure calibrations? At the extreme ends of the pressure range, the choices are more obvious. For lower pressures, especially pressures below 7 MPa (1000 psi), a gas medium is most common: the pressures are relatively easy to generate, gas is clean, and it eliminates large head pressure errors. For pressures above 100 MPa (15 000 psi), there are very few equipment choices for using a gas medium, and generating the pressure becomes difficult. Oil should be used unless there is a specific compatibility issue that makes it impossible.

What about all the pressures in between? In calibration laboratory applications, gas media have commonly been used at pressures up to (or even above) 14 MPa (2000 psi), where nitrogen cylinders are readily available. In recent years, the breakpoint between gas and liquid media has continued to rise as higher-pressure gas cylinders and ways of boosting gas to higher pressures have become available. The advantages of using gas instead of liquid include a reduced chance of contaminating the device under test (DUT), shorter stabilization times due to lower viscosity, smaller head pressure errors, and more options for automated equipment. These advantages have to be weighed against the disadvantages, including the fact that high-pressure gas stores more compressed energy, making it more dangerous if an over-pressure event occurs. Following the safety guidelines given above will help mitigate this risk.
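The difference in stored energy can be estimated with two textbook approximations that are not taken from this note: isentropic expansion of an ideal gas down to atmospheric pressure, and the elastic energy V·P²/(2K) of a liquid with constant bulk modulus K. The 1 L volume, the 2 GPa bulk modulus, and γ = 1.4 (the ideal value for a diatomic gas such as nitrogen) are assumptions, so the results are order-of-magnitude figures only.

```python
def gas_expansion_energy_j(p_pa: float, volume_m3: float,
                           p_atm_pa: float = 101_325.0, gamma: float = 1.4) -> float:
    """Work released if an ideal gas expands isentropically from p_pa to p_atm_pa:
    W = P1*V1/(gamma - 1) * (1 - (p_atm/P1)**((gamma - 1)/gamma))."""
    return (p_pa * volume_m3 / (gamma - 1)
            * (1 - (p_atm_pa / p_pa) ** ((gamma - 1) / gamma)))


def liquid_compression_energy_j(p_pa: float, volume_m3: float,
                                bulk_modulus_pa: float) -> float:
    """Elastic energy stored in a liquid with constant bulk modulus K: V*P**2 / (2*K)."""
    return volume_m3 * p_pa**2 / (2 * bulk_modulus_pa)


P_PA = 20e6          # 20 MPa
VOLUME_M3 = 1e-3     # assumed 1 L pressurized volume
K_LIQUID_PA = 2.0e9  # assumed bulk modulus for the liquid

gas_j = gas_expansion_energy_j(P_PA, VOLUME_M3)
liquid_j = liquid_compression_energy_j(P_PA, VOLUME_M3, K_LIQUID_PA)
print(f"gas: {gas_j / 1e3:.0f} kJ, liquid: {liquid_j:.0f} J, ratio: {gas_j / liquid_j:.0f}x")
```

With these assumed numbers the gas stores roughly 39 kJ against about 100 J for the liquid, a difference of more than two orders of magnitude. A real liquid system also stores energy in its stretched walls and in any trapped air, so the liquid figure is a lower bound.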

Fluid compatibility

An important consideration in selecting the fluid is to ensure that it is compatible with the devices that will be tested as well as the reference standard. This is no easy task. Most high-pressure measurement devices are used with a liquid medium in their “real world application.” If your calibration system uses a gas medium, then the liquid from the DUT could contaminate the system. One way to eliminate this issue is to use a contamination prevention system. It allows a liquid-filled device to be calibrated using a gas pressure controller without contaminating the pressure controller. Another approach is to use a reference standard that uses liquid as the medium. In this situation, there is still a concern if the reference standard and the DUT use different liquids. For example, a deadweight tester or piston gauge is designed to only operate with a specific fluid. If a different oil than the one provided is used, it may damage or alter the behavior of the piston-cylinder assembly. One workaround is to use a liquid-to-liquid separator.

Pressure stability

To measure pressure properly, the pressure must be sufficiently stable. This can be challenging at high pressures. As discussed previously, the pressure can become unstable because of changes in volume (expansion and contraction of pressure tubing), leaks, and temperature effects. Of these, temperature effects are the biggest challenge. As the pressure in a system increases, the temperature of the fluid also increases. Once the pressure stops increasing, the fluid cools until it reaches thermal equilibrium, and as the temperature decreases, the pressure decreases with it. This pressure drop may look like a leak even though no leak exists. The difference lies in how the drop evolves: a leak causes the pressure to keep falling with no settling or slowing down, whereas a drop caused by temperature will eventually settle out if given sufficient time to stabilize. How do we overcome these temperature effects?

- Using a deadweight tester or piston gauge. The floating piston acts as a natural regulator to maintain a stable pressure. As the temperature decreases, the piston sinks, changing the volume of the system. As long as the piston is floating with no friction between the piston and cylinder, the system will be at equilibrium and the pressure will be stable.

- Using a pressure controller. An automated pressure controller actively attempts to maintain a stable pressure by counteracting the temperature effects, either by adjusting the number of molecules in the system or by changing the volume of the system.

- Using a pressure comparator or similar manual device. This is where temperature effects are most noticeable, as there is nothing to counteract them. For applications where hysteresis is not an issue, increase the pressure past the setpoint and then decrease it back to the setpoint. This helps balance out the temperature effects and causes the device to stabilize more quickly. If this is not feasible, then extended stabilization time may be necessary.
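The distinction between a leak and thermal settling can also be checked numerically from readings taken at a fixed interval. The sketch below is an assumed approach rather than a procedure from this note: it fits a least-squares slope to the first and second halves of the record. If the later slope is much smaller than the earlier one, the drop is slowing down (consistent with temperature settling); if it is not, a leak is more likely. The function names and both thresholds (`stable_rate`, `settling_ratio`) are hypothetical and would need tuning for a real system.

```python
import math


def slope(times: list[float], values: list[float]) -> float:
    """Least-squares slope of values versus times."""
    n = len(times)
    mean_t = sum(times) / n
    mean_v = sum(values) / n
    num = sum((t - mean_t) * (v - mean_v) for t, v in zip(times, values))
    den = sum((t - mean_t) ** 2 for t in times)
    return num / den


def classify_drift(times: list[float], pressures: list[float],
                   stable_rate: float, settling_ratio: float = 0.5) -> str:
    """Classify a pressure record as 'stable', 'settling' or 'possible leak'.

    stable_rate: drift (pressure units per second) at or below which the reading is stable.
    settling_ratio: the late slope must be below this fraction of the early slope
    for the drift to count as settling.
    """
    half = len(times) // 2
    early = slope(times[:half], pressures[:half])
    late = slope(times[half:], pressures[half:])
    if abs(late) <= stable_rate:
        return "stable"
    if abs(late) < settling_ratio * abs(early):
        return "settling"       # drop is slowing down: consistent with thermal effects
    return "possible leak"      # drop is not slowing down


# Synthetic readings every 10 s for 5 minutes (pressures in MPa)
t = [10.0 * i for i in range(31)]
thermal = [19.95 + 0.05 * math.exp(-ti / 60) for ti in t]
leak = [20.0 - 1e-4 * ti for ti in t]
print(classify_drift(t, thermal, stable_rate=1e-6))  # → settling
print(classify_drift(t, leak, stable_rate=1e-6))     # → possible leak
```

Comparing slopes rather than looking at a single pressure difference is what separates the two cases: both records lose pressure, but only the thermal one loses it more slowly over time.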

Fitting and tubing selection

When setting up a high-pressure calibration system, there are important considerations when selecting the fittings and tubing. The first is that everything must be properly rated for the pressures in question. The manufacturer rates all pressure fittings and tubing for a given maximum working pressure, and they should only be used within their rated pressures. With that in mind, it may seem prudent to buy the highest-rated tubing available and always use it, no matter what pressure you are actually going to generate. This approach has disadvantages, however: higher-pressure tubing normally has a smaller internal diameter, and when used with viscous fluids this will increase settling times.

A balance has to be established that ensures proper pressure ratings and flow rates. Care should also be taken when using flexible tubing at high pressures. A pressurized unsecured tube can whip around, causing damage or injury. Make sure all fittings are tight and the system is secure before pressurizing.

High-pressure reference standards

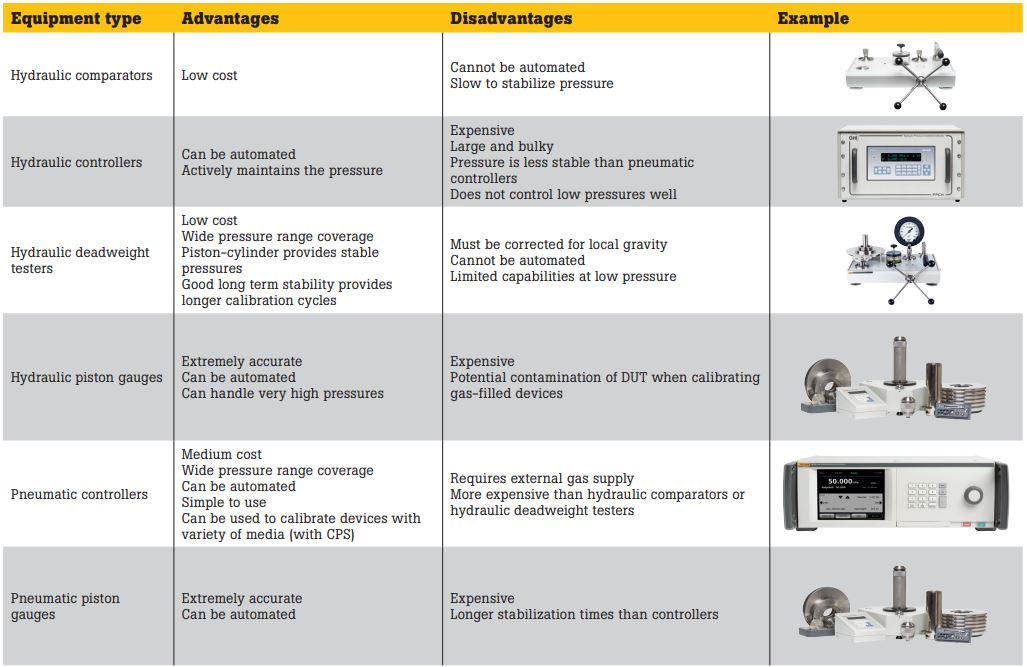

There are a number of different types of reference standards available for high-pressure calibration, each with their own advantages and disadvantages.